This project was created as part of a university assessment in semester 2 of 2017. We were to design and build an interactive VR environment, and we were strongly encouraged to explore a new type of technology. Ultimately, I chose to create a narrative controlled by voice commands.

Main Product:

- Unity: Game Engine

- SteamVR Plugin for Unity

- Blender: 3D Modelling

- Google Blocks: 3D Modelling

- Audacity: Audio recording and editing

- VoiceAttack: Voice recognition software

Playthrough Demo:

- Icecream Screen Recorder

- Adobe Premiere Pro

- YouTube

Throughout the design and build process, I kept digital scrapbooks to collect all the information I came across. I have included screenshots from the original scrapbooks below.

Scrapbook 1

For the first stage of the main project, I conducted a large amount of research into what I could actually create for the final project. I knew from the start that I wanted to involve some form of interactive storytelling, and after a recent Star Trek marathon, I wanted to aim for the holodeck. So this meant voice commands and a narrative.

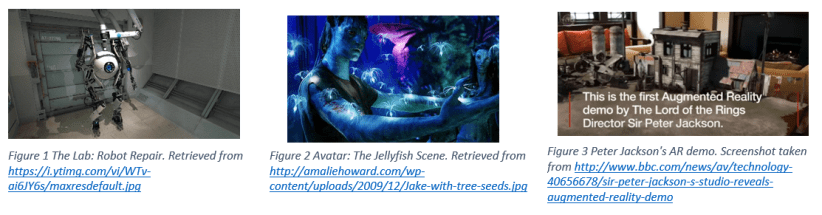

For the narrative aspect, I explored a variety of existing 3D, VR and AR narrative experiences. I stumbled upon the following examples in my first round of research.

To find out more about voice commands and how this kind of software works, I spoke with a colleague to get an overview of the technology and how I could integrate it into a game. He sketched up some basic details for me, which took some of the pressure off working out how to implement this feature in the project. After all, I had never even looked into this before. I then conducted further research into which voice command software to use. My final decision was to work with VoiceAttack, voice recognition software that is popular with gamers and has a free version available.

Finally, I decided to build this narrative for the HTC Vive.

Scrapbook 2

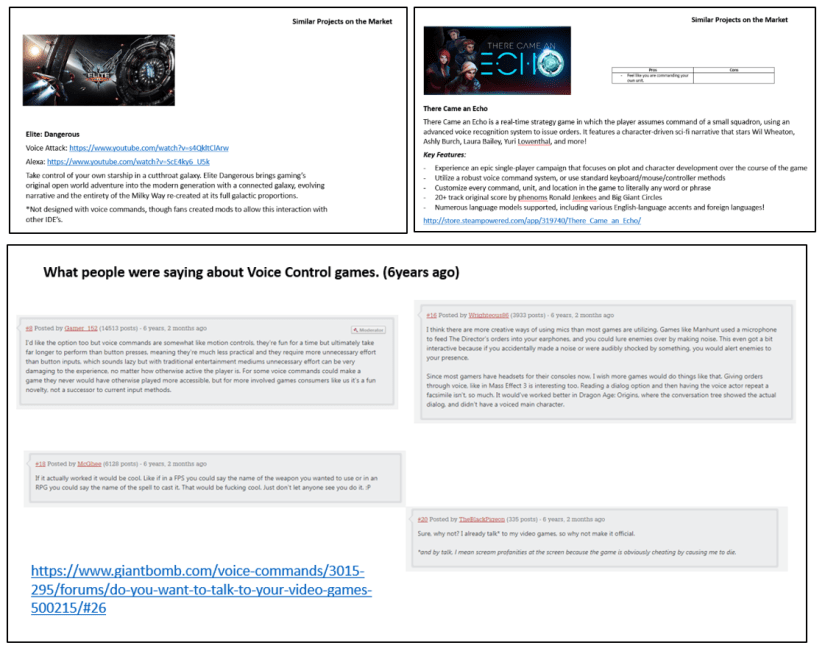

In this stage of the research, I began to focus more on the angle of the pitch and what I would be presenting at the end. I looked further into projects that included either an interactive narrative or voice commands. I also read online comments about voice commands. Knowing what people liked and disliked helped me in the design process.

The following three images are screenshots of pages from Scrapbook 2.

Results from research: There seemed to be demand for the technology; however, the tech has not yet caught up with users' actual needs. There are still bugs and issues with recognising accents, and while this is improving rapidly, we are not quite there yet. Game makers are currently experimenting with different ways to use voice commands as controls, but many of these implementations end up frustrating users rather quickly. So, I'm in for a rough project that isn't going to do what I originally thought 😛

Also at this point, I decided to set the story in the fantasy/sci-fi genre, in a world that mixes science and magic (think Star Wars, but not Star Wars). However, this did eventually change.

Scrapbook 3

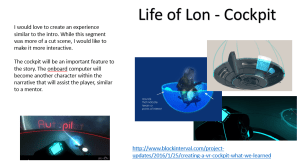

It was during this stage that my project's narrative direction changed. After playing the Life of Lon demo and Titans of Space, I wanted to make something where you could pilot your own spaceship around a planet. I therefore reworked my project into a cockpit-based sci-fi game involving conversations between the player, their ship's AI and a space station attendant. I should note that this decision was also meant to make things easier for me, as this would be the first time I had used Unity.

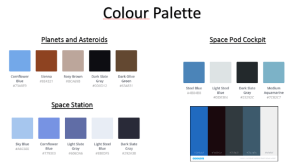

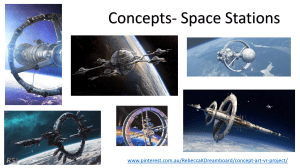

I started to build a dreamboard filled with art similar to the direction I wanted to take. This is the step I enjoy most, as I get to see the great art others have created, and it also helps me conceptualise my direction. From here, I began creating a design template to use once I actually started building objects for the scene.

The following images are screenshots from Scrapbook 3 and show the process mentioned above.

Scrapbook 4

At this point in the project, I continued researching VoiceAttack to understand how it works. I also began researching how to integrate the HTC Vive with Unity, and I finally started building objects.

When it came to building objects for the scene, our assignment required us to build at least one object in Blender. This was not a fun experience for me, and I hope I never have to return to that program again. But alas, I managed to build a usable space station. Because my time with Blender was not the best, I looked for other ways to get a spaceship.

I had a look at some open-source 3D models, but I ended up having a go at Google Blocks, which had only recently been released at the time. It let me build an object while in virtual reality, so I was literally using my hands to shape the object while also being able to walk around it. It was amazing! My skills, not so much. I made a couple of test spaceships and ended up with the one pictured below.

Check out my Google Blocks creations. Warning: they're not that great.

Scrapbook 5

This final stage of the process involved bringing everything together and making it work in Unity. I watched hours and hours of tutorials, and the number one thing I learned is that online tutorials are not much help if you don't have a basic understanding of what you're doing. So there were a couple of weeks of stabbing in the dark, crying in the corner, and desperate pleas to the lecturers (you guys were so patient, thank you!).

Eventually, the great big project I had wanted to make ended up as a rather simple concept prototype. Here are some final reflections:

- The voice commands did work, but only for me.

- The animation did not respond to any interaction, but I did get some basic animation working.

- The little tweaks, such as having the space station ring turn, improved the look.

- I have to keep reminding myself that, for someone who had never touched Unity or voice commands before, this is okay for a 13-week project (with only a few hours a week dedicated to it).

One Sentence Pitch

Space Cargo is a sci-fi virtual reality interactive narrative that uses voice commands to immerse the user.

Story Summary

A cargo pilot is arriving at Space Station Turing to accept a delivery. The user must open a channel to the space station to arrange docking. Once docked, they are to accept the delivery and leave.

Final note: Sadly, I never got to show this project off at the expo, but I am glad that I got to make it. Breakdowns and all.